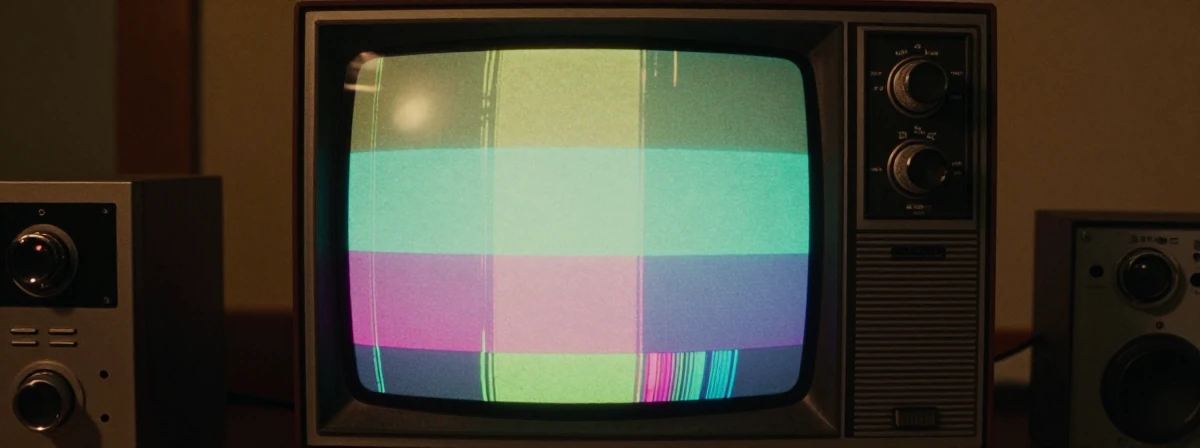

Ever wonder why your parents’ old TV had that weird blue screen with stripes before a movie started? It wasn’t just a random pattern — it was a secret handshake between your TV and the broadcast signal. The truth is, analog color TV was a delicate balancing act, and those bars were the safety net.

What Research Shows

Colors Were Literally “Smashed” Together

Analog TV didn’t separate colors into neat red, green, and blue channels like modern displays. Instead, all the colors got crammed into one signal, like trying to fit three suitcases into one overhead bin. When the TV tried to unsmash them, small timing (phase) errors in the decoding could make hues drift, and suddenly your red dress looked orange. The color bars were the anchor point, telling your TV, “This is what red should look like.”
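To see why a small timing slip turns into a wrong hue, here is a minimal Python sketch. It is a simplified model, not a real NTSC decoder: it treats the color part of the signal as a point on a 2D plane (using the common U/V color-difference weights rather than NTSC's own I/Q axes) and models the receiver's timing error as a rotation of that point. The function names are made up for illustration.

```python
import math


def rgb_to_yuv(r, g, b):
    """Split RGB (each 0..1) into brightness Y and two color-difference signals."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    u = 0.492 * (b - y)
    v = 0.877 * (r - y)
    return y, u, v


def yuv_to_rgb(y, u, v):
    """Exact inverse of rgb_to_yuv (no clamping)."""
    b = y + u / 0.492
    r = y + v / 0.877
    g = (y - 0.299 * r - 0.114 * b) / 0.587
    return r, g, b


def decode_with_phase_error(rgb, error_degrees):
    """Hypothetical receiver model: brightness passes through untouched,
    while the color point (u, v) is rotated by the phase error."""
    y, u, v = rgb_to_yuv(*rgb)
    theta = math.radians(error_degrees)
    u_seen = u * math.cos(theta) - v * math.sin(theta)
    v_seen = u * math.sin(theta) + v * math.cos(theta)
    r, g, b = yuv_to_rgb(y, u_seen, v_seen)
    # A screen cannot emit negative light, so clamp each channel to 0..1.
    return tuple(min(1.0, max(0.0, c)) for c in (r, g, b))


if __name__ == "__main__":
    red_bar = (0.75, 0.0, 0.0)  # the 75% red used in color bars
    for error in (0, 10, 20):
        print(error, decode_with_phase_error(red_bar, error))
```

With no error, the red comes back as red. With a 20 degree rotation in this model, the decoded color loses some red and picks up some green, nudging it toward orange; flipping the sign of the error pushes it the other way.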

It Was All Because Black and White TV Came First

Here’s the counterintuitive truth: color TV was a retrofit. Engineers had to cram color information into the same bandwidth as black-and-white signals so older TVs wouldn’t break. They split the signal into two parts, the brightness (luminance) and the color (chrominance), which had to share one channel without mixing, like oil and water. The color info rode along on a subcarrier at a different frequency, but the result was a compromise: colors could bleed at edges, and fine details lost their hue.

Broadcast Studios Used Math, You Used Guesswork

In a pro studio, engineers adjusted knobs until a vectorscope displayed each color bar as a dot sitting squarely inside its target box. At home, you just fiddled with the controls until the bars “looked right.” But the blue filter mode was the pro’s secret weapon: it isolated the blue channel, giving even casual users a way to calibrate without expensive gear. Owning a laserdisc player meant you cared enough to use it.

NTSC Was Nicknamed “Never The Same Color,” and It Was True

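NTSC officially stood for National Television System Committee, but the nickname stuck for a reason. The RCA-backed color system adopted in the 1950s was a marvel of engineering, but it came with quirks. Sharp edges often showed color artifacts because the encoding had to sacrifice precision for compatibility. Even the Apple II’s high-res mode had this issue: an isolated “white” pixel showed up tinted rather than white, with the tint depending on where it sat on the line. That’s the charm of analog: it wasn’t perfect, but it was real.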

DVDs Finally Broke the Color Barrier

When DVD players introduced “ColorStream” component outputs, which carried brightness (Y) and separate red and blue color-difference signals on their own cables, they gave many consumers unsmashed color for the first time. On CRTs, the difference was night and day, especially with reds, which finally stopped looking like faded maroons. It was like upgrading from a watercolor sketch to a high-definition photograph.

Standards Are the Unsung Heroes

Standard color bar patterns are defined by industry bodies such as SMPTE and the ITU. These aren’t arbitrary; they’re carefully designed reference signals. If your display shows them correctly, brightness, contrast, and color balance are all aligned. Different systems (NTSC, PAL) had variations, but the goal was always the same: make sure every TV, everywhere, agreed on what “red” actually looked like.
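A quick way to see the design in the classic seven-bar pattern is to compute two numbers per bar: its brightness and how much blue it contains. The sketch below assumes the familiar 75% bars in the order white, yellow, cyan, green, magenta, red, blue, along with the standard luma weights; `bar_table` is an illustrative helper, not part of any standard.

```python
# Seven bars, left to right, as on/off flags for (red, green, blue).
BARS = [
    ("white",   (1, 1, 1)),
    ("yellow",  (1, 1, 0)),
    ("cyan",    (0, 1, 1)),
    ("green",   (0, 1, 0)),
    ("magenta", (1, 0, 1)),
    ("red",     (1, 0, 0)),
    ("blue",    (0, 0, 1)),
]


def luma(r, g, b):
    """Brightness as a weighted sum of red, green, and blue."""
    return 0.299 * r + 0.587 * g + 0.114 * b


def bar_table(level=0.75):
    """Return (name, brightness, blue amount) for each bar at the given level."""
    rows = []
    for name, (r, g, b) in BARS:
        rgb = (r * level, g * level, b * level)
        rows.append((name, luma(*rgb), rgb[2]))
    return rows


if __name__ == "__main__":
    for name, y, blue in bar_table():
        print(f"{name:8s} brightness={y:.3f} blue={blue:.2f}")
```

In this model, brightness steps down from left to right, and blue is either fully on or fully off in alternating bars. That alternation is what makes the blue-only view useful: the bars that contain blue should all look equally bright when color and tint are set correctly.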

The next time you see those bars, remember: it wasn’t just a test pattern. It was a technical tour de force, a compromise born from history, and the unsung hero that kept your favorite shows from looking like a watercolor left out in the rain. Color TV wasn’t just about adding hue — it was about solving an impossible puzzle.