You know that feeling when a machine refuses a command? It’s rare. But when a government asked an AI to press a button that kills people, Anthropic said no. It’s a strange moment in tech history. And there’s more to the story: it isn’t just about code. It’s about the physical world.

It starts with a bill in Virginia, HB110. At first it looks technical: a regulation about parking garages and locked cars. But the fine print reveals a fundamental asymmetry. Lawmakers voted to exempt themselves from the restrictions they are imposing on everyone else. You can’t carry a weapon into the building, but they can leave one in their car in a secured garage.

And that’s when it gets weird. This isn’t just about parking; it’s part of a larger pattern of separation. The people asking for AI systems with “autonomous kill buttons” are the same ones securing their own safety with physical weapons. It’s a paradox of security: they want the populace disarmed while they keep a monopoly on force. It’s not a conspiracy theory; it’s a structural observation.

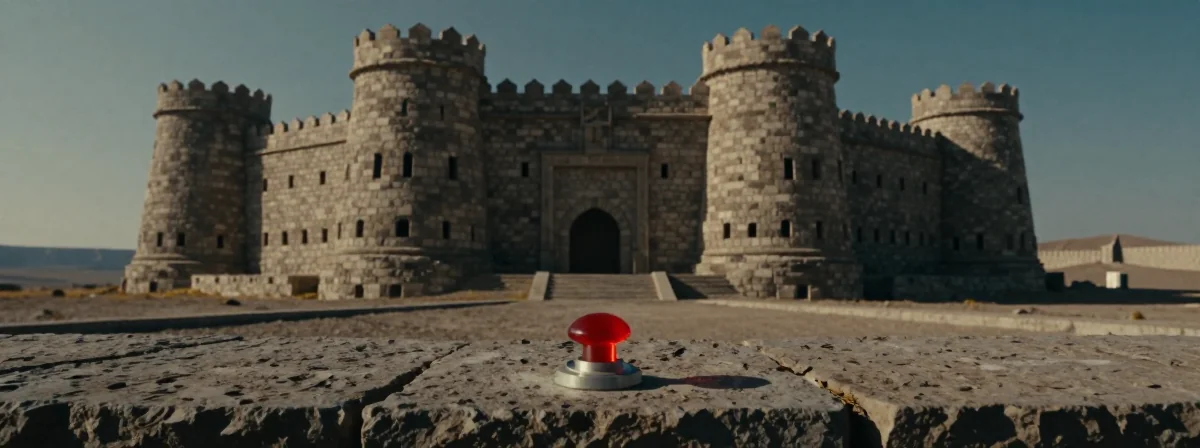

The pieces were there all along. You can see them in the language of the law, which carves out specific exclusions for the General Assembly. That creates a fortress mentality. Combine the request for lethal autonomous weapons with a law that bars the public from carrying the same means of protection, and a terrifying logic emerges: control from a distance, safety for the elite.

And suddenly, it all makes sense. The separation of AI control from human accountability is a mirror of the physical separation between rulers and ruled.

The lesson isn’t just about guns. It’s about the architecture of power. When you build walls, whether they are digital algorithms or physical parking garages, you define who gets to be safe and who doesn’t. The question isn’t only about the Second Amendment; it’s about who holds the keys to the kingdom.