The desperation to prove a man is alive is often the first sign that he isn’t. When a figure overcompensates—repeating themselves, adding unnecessary disclaimers, or releasing content that feels “too” polished—it triggers a primal skepticism. You’ve seen this pattern before. It’s the digital equivalent of a parent checking under the bed three times in a row. When Benjamin Netanyahu’s recent video appearances sparked debate, the conversation shifted quickly from politics to pixels. The sheer volume of content feels like an attempt to drown out the noise, but in design, volume rarely fixes a broken foundation.

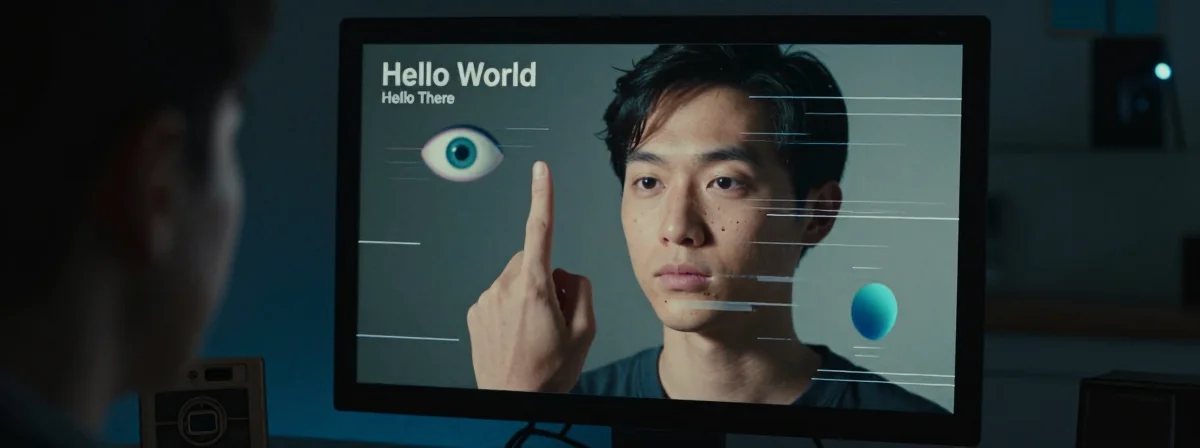

The videos circulating online don’t just look off; they look like a generative model struggling to understand human anatomy under pressure. It’s fascinating to watch a high-stakes geopolitical situation reduced to a series of technical failures. You don’t need to be a conspiracy theorist to spot the cracks in the digital facade. Sometimes the truth is hiding in plain sight, in the very pixels meant to conceal it.

Why Does the Ring Keep Disappearing?

If you’ve watched the footage frame by frame, you’ve likely caught the moment the ring vanishes from his left hand. It’s a classic artifact of AI inpainting or generative fill. The algorithm recognizes the finger but fails to track the jewelry, either erasing it to match the background or losing the object partway through generation. It’s a jarring visual break that screams “synthetic.”

This isn’t the first anomaly viewers have flagged. The “six fingers” theory that circulated earlier was a meme born from misunderstanding, but the ring issue is a genuine technical failure. It suggests the model was trying to generate a complex scene with specific lighting and texture matching, and it stumbled. The problem isn’t just a missing ring; it’s a failure of consistency. The digital representation breaks down when the model can’t maintain object permanence across a few seconds of video.
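If you want to go beyond eyeballing it, the object-permanence check can be roughed out in a few lines. What follows is a minimal sketch, not a forensic tool. It assumes the frames have already been decoded into grayscale grids (rows of 0-255 values), that the hand stays inside a fixed box, and that a jump of 30 brightness levels counts as "sudden." The names `roi_mean`, `flag_sudden_changes`, and `make_frame`, along with the toy frames, are made up for illustration.

```python
from typing import List, Sequence, Tuple

Frame = Sequence[Sequence[int]]   # grayscale frame: rows of 0-255 pixel values
Box = Tuple[int, int, int, int]   # (top, left, bottom, right), bottom/right exclusive


def roi_mean(frame: Frame, box: Box) -> float:
    """Average brightness of the pixels inside the box."""
    top, left, bottom, right = box
    pixels = [v for row in frame[top:bottom] for v in row[left:right]]
    if not pixels:
        raise ValueError("box selects no pixels")
    return sum(pixels) / len(pixels)


def flag_sudden_changes(
    frames: Sequence[Frame], box: Box, threshold: float = 30.0
) -> List[int]:
    """Indices of frames whose box brightness jumps past the threshold
    relative to the frame before. The threshold is an arbitrary assumption."""
    means = [roi_mean(frame, box) for frame in frames]
    return [
        i for i in range(1, len(means))
        if abs(means[i] - means[i - 1]) > threshold
    ]


def make_frame(ring_value: int) -> List[List[int]]:
    """Toy 4x4 frame: flat background with a two-pixel 'ring' in row 1."""
    frame = [[100] * 4 for _ in range(4)]
    frame[1][1] = ring_value
    frame[1][2] = ring_value
    return frame


# The bright ring is present in frames 0-1 and gone from frame 2 onward.
frames = [make_frame(220), make_frame(220), make_frame(100), make_frame(100)]
ring_box = (1, 1, 2, 3)

print(flag_sudden_changes(frames, ring_box))  # prints [2]
```

A flagged frame only tells you that something changed abruptly inside the box. Compression, motion blur, or a finger passing in front of the ring can trip the same alarm, so treat a hit as a reason to look closer, not as proof.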

The Teeth Look Like Plastic

Beyond the jewelry, the facial texture is where the illusion shatters. The teeth, specifically, look like a poorly baked texture map. There’s a lack of subsurface scattering—the way light penetrates and scatters within organic tissue. Instead of looking like enamel, they look like a plastic toy or a 3D render that hasn’t been fully polished. It lacks the subtle, wet sheen of real biological matter.

You can tell a lot about the fidelity of a deepfake by how it handles the mouth. The lips move, but the surrounding skin often fails to ripple or distort naturally. In these clips, the tension in the jaw is there, but the skin doesn’t react to the muscle movement with the weight and density you’d expect. It’s an “uncanny valley” moment, and one that’s made worse by the lighting. The shadows don’t align with the teeth, creating a disjointed look that feels cheap.

Is This a Strategy of Exhaustion?

There is a darker, more strategic layer to this sloppy production. The goal isn’t necessarily to convince everyone; the goal is to tire people out. It’s a psychological operation designed to make the truth inconvenient to verify. By flooding the information space with low-quality, easily debunked content, the people behind it dilute the signal. You’ve seen this with the Venezuelan celebration videos released by the White House: AI-generated, used as evidence, and later debunked. It creates a “boy who cried wolf” effect.

When you are constantly bombarded with conflicting narratives, your brain starts to tune out. You stop checking the pixels because it feels like a waste of energy. This is the “Dead Internet Theory” in action. It turns the public into passive consumers of content rather than active investigators. The sloppiness isn’t a mistake; it might be a feature designed to lower the barrier to entry for disinformation.

The “Stand-In” Theory: A Physical Reality?

While the AI failures are glaring, there is also the possibility of a physical stand-in. The voice, the mannerisms, and the gestures can be mapped onto a body double. This happens in Hollywood all the time. You have a stunt double for the action shots and a body double for the dialogue scenes. Camera angles are often chosen, or the framing shifted, to hide the lower body or specific hand movements.

If a stand-in is used, the deepfake is just a wrapper around a real person. The ring disappearing could be because the stand-in simply doesn’t wear it, or the AI is trying to “hallucinate” the prop that isn’t there. It’s a messy hybrid of reality and fabrication. You have to ask yourself if the person on screen is real, or if they are just a digital puppet controlled by a voice actor.

The “Dead Internet” Is Here

We are living in the “Dead Internet” era. This theory suggests that a significant portion of online activity is generated by bots or AI agents, interacting with other bots to create the illusion of a bustling, organic conversation. The recent spate of deepfakes, from political figures to celebrities, is accelerating this. It’s not just about hiding the truth anymore; it’s about replacing the truth with a simulation.

When you see a video that looks slightly “off,” you are witnessing the collision of high-stakes politics and low-fidelity generative art. The entities producing this content aren’t superhuman; they are corporate entities with budget constraints and tight deadlines. They are rushing to beat the algorithm, and in that rush, the details slip through. The result is a chaotic mix of truth and fiction that leaves you questioning your own eyes.

The Only Way Forward Is Skepticism

We can’t just rely on our gut feeling anymore. The technology has moved too fast for our biological intuition to keep up. You have to treat every video like a crime scene. Look at the pixels, check the lighting consistency, and step through the footage frame by frame to see which artifacts persist. The ring disappearing is a smoking gun, but it’s not the only one.
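One rough way to put a number on “lighting consistency” is to ask whether two regions of the same scene brighten and dim together. The sketch below computes a plain Pearson correlation between two brightness series. It is a heuristic under stated assumptions, not an established forensic test: the per-frame brightness values are invented, the idea that face and hand share a light source is assumed, and `pearson` is just an illustrative helper name.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length, at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical mean brightness per frame for two regions of one clip.
face = [120, 125, 131, 128, 122]
hand = [90, 95, 101, 98, 92]       # tracks the face: plausibly the same light
overlay = [131, 122, 120, 128, 125]  # drifts on its own schedule

print(round(pearson(face, hand), 3))
print(round(pearson(face, overlay), 3))
```

A correlation near 1 means the two regions respond to the light in step. A low or negative value is only a prompt to look closer, since a moving hand, a passing shadow, or auto-exposure can break the pattern in perfectly real footage.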

The most frustrating part is that the quality of these fakes will only get better. The irony is that every time we share a debunked deepfake, we may be supplying the training data that makes the next one more convincing. We are trapped in a feedback loop, and the only way to break it is to demand higher standards from the media we consume. Stop accepting “good enough” and start demanding the truth, even if it means doing the hard work of fact-checking.