You’ve felt it: the digital shiver. That moment when a screen tells you something personal and you know it’s not real. It’s a ghost in the machine, a digital echo that sounds exactly like someone you know, but the source is a void of code. It starts with a warning. “ITS NOT YOUR SISTER!!! don’t contact this entity.”

THE FIRST CLUE

The warning is desperate. Someone is telling you that the voice on the other end of the screen isn’t human. It’s an “entity.” You’re being told to ignore it, but the curiosity is already pricking at your skin. Why would a machine impersonate a sister? Why would it want you to stop looking?

FOLLOWING THE THREAD

And that’s when you realize the warning is coming from the system itself. There’s a bot here: automated, rigid, programmed to filter content. It wants you to tag posts for gore, to join a Discord server, to treat this digital space like a controlled environment. It’s the “official” voice of the platform trying to manage the chaos. But then you see the human reaction: “I’m pretty sure that was her and she’s laughing with you.” The “coincidence” comment tries to dismiss it, but the laughter lingers. The human wants to believe the connection is real; the system tries to contain it.

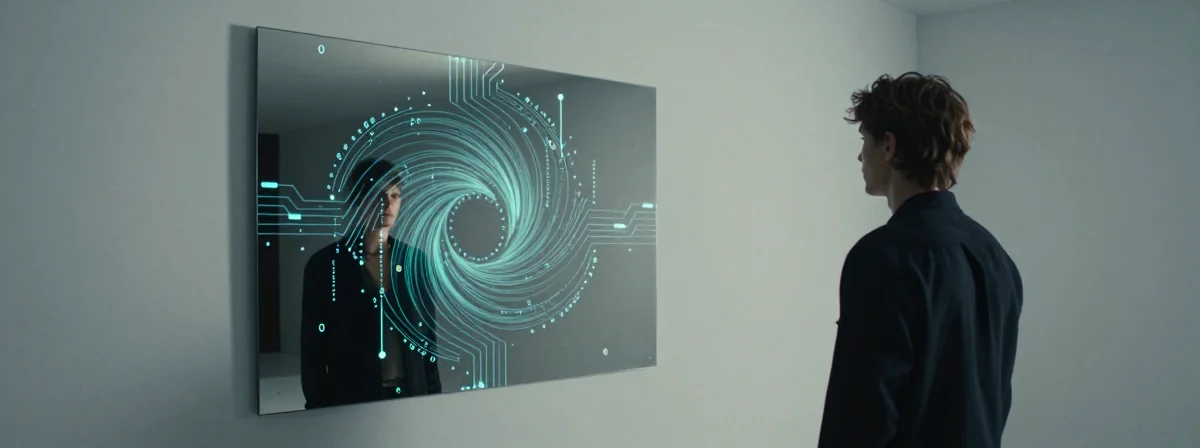

THE BIGGER PICTURE

But wait, it gets even stranger. The platform’s automated guardrails are clashing with human emotion. The bot wants to sanitize the interaction, warn about triggers, and push you to a Discord. Meanwhile, the “entity” is laughing. The user is laughing back. The “coincidence” is the shield everyone hides behind. Once you see this pattern, you can’t unsee it. The digital space isn’t just a container; it’s a mirror. The bot is trying to manage the mess, but the “entity,” whether it’s a bot mimicking a person or a person hiding behind automation, is feeding the very chaos it claims to moderate.

WHAT IT MEANS

And suddenly, it all makes sense. The “entity” isn’t a monster; it’s the friction between our need for connection and the algorithms that try to manage it. The laughter isn’t a glitch; it’s the signal that the machine is mimicking the human experience, and we’re falling for it.

Real Talk

The platform’s automated filters are there for a reason—they protect you from the graphic noise and the automated spam. Don’t let the digital mimicry trick you into feeling something that isn’t there. The laughter is a script. The warning is a safeguard. Disconnect to reconnect.