What if I told you that the very technology designed to connect us is being used to systematically unravel our sense of reality? It’s happening right before our eyes, embedded in the videos we scroll past every day. You might think you’re just seeing another post, another clip, but some of these aren’t just videos – they’re intricate puzzles, constructed with flaws on purpose. It all makes sense now! The inconsistencies, the glitches, the things that just don’t add up – they aren’t accidental. Think about it. When you get too close, when you start questioning, the system seems to adjust, doesn’t it? Like the ratio changes, the comments get hidden. It’s not paranoia; it’s observation. This isn’t just about one suspicious video; it’s about recognizing a pattern, a deliberate ‘slip up’ in their control mechanisms that, if you know where to look, exposes the whole operation.

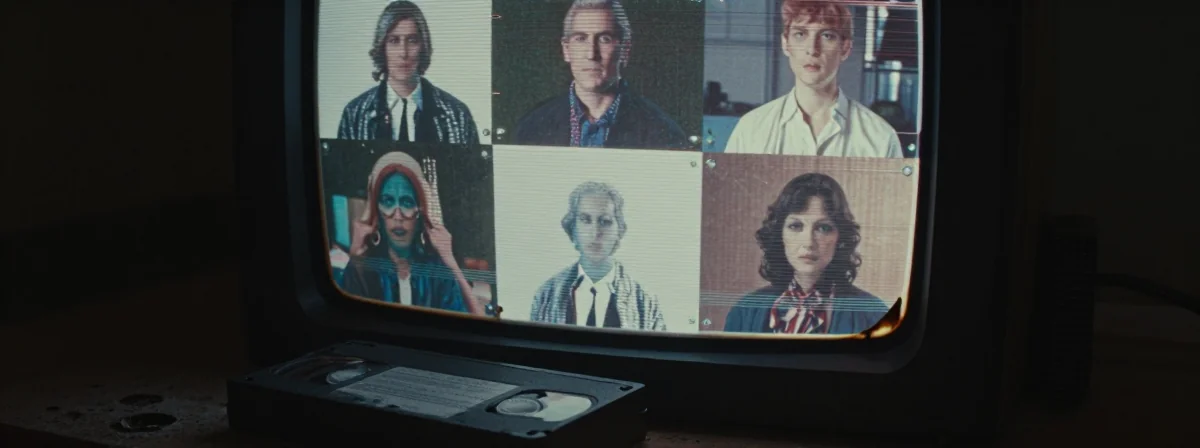

This awareness started with noticing simple things – a ring vanishing from a finger in a TikTok, a car appearing undamaged after apparent gunfire, colors that seem too vibrant, ratios that shift when scrutiny increases. It’s like they’re testing the waters, seeing how closely we’re watching. Remember those intense events, like Oct 7th? The flood of footage was overwhelming, and yet, anomalies surfaced. A white car rolling away empty, no damage, no evidence of a struggle despite the claims – where’s the logic in that? It wasn’t just one person noticing; it was many. And when you see it yourself, like checking that official TikTok link and watching the ring disappear right before your eyes, it’s impossible to deny. People in the know, people working with media, they see it too. It’s a shared secret, a code hidden in plain sight, telling us that what we’re seeing might be carefully crafted fiction. This isn’t about falling for a theory; it’s about developing the critical lens to see the manipulation happening constantly.

The realization is stunning: these aren’t glitches; they might be deliberate breadcrumbs. It’s like they’re showing us the seams, maybe to gauge our reaction, maybe to boast about their ability to control the narrative even when it looks fake. What if I told you that every time we point out one of these anomalies, it’s not just us getting closer to the truth, but also them learning how to better hide it next time? It’s a constant game of cat and mouse, and the cat seems to be leaving clues. They didn’t ‘slip up’; they placed those clues there. Maybe it’s to keep us guessing, maybe to make us question everything, maybe to distract from even bigger operations. But the pattern is undeniable. The more you look, the more you see. It’s not just one video or one event; it’s a methodology. A way to insert false narratives, protect certain figures, or simply demonstrate power by controlling the flow of visual information. The ‘slip ups’ are the keys to understanding the game.

Why Do These ‘Slip Ups’ Happen? It’s Not an Accident!

Forget coincidences. When you see consistent patterns across different videos – from political figures to breaking news events – pointing fingers at random glitches just doesn’t cut it anymore. It all makes sense now! These ‘slip ups’ are likely intentional oversights, placed there for a reason. Think about it: if you’re trying to manipulate public perception on a massive scale, you can’t make it look too perfect. There has to be a way for the initiated to see through the facade. It’s like a magician revealing a tiny part of the trick – not enough to ruin the whole act, but enough to make you question it if you’re paying close attention. These anomalies – the disappearing rings, the mismatched backgrounds, the unnatural movements, the impossible physics like cars rolling away undamaged – serve as those tiny reveals. They’re not mistakes; they’re signals. Signals that the video has been tampered with, that it’s not what it purports to be. It’s a high-stakes game of psychological warfare, and these ‘slip ups’ are their way of showing off their control, or perhaps testing our defenses.

Consider the example of the ring vanishing on that official TikTok. It wasn’t a single frame error; it happened multiple times, noticeable to anyone who paused and looked. Why program that specific error? It doesn’t make technical sense for a simple edit. It makes sense, however, if the goal is to plant a seed of doubt. It’s like a digital watermark, but instead of authenticating, it’s flagging the content as potentially synthetic. And it’s not just one person noticing; the comments on the real page confirmed it. This isn’t isolated; it’s a tactic. They might be using AI that isn’t quite perfect yet, or perhaps they deliberately leave these flaws. It’s a way to manage the narrative: release a fake video, wait for the initial reaction, then let the ‘slip ups’ surface, creating confusion and division about what’s real. It keeps us arguing about the details while the broader manipulation continues unchecked. These aren’t glitches; they’re strategic placements, tiny cracks in the dam of their carefully constructed reality.

The Pattern of Protection and Deception

It’s not just about isolated videos; there’s a larger pattern connecting these anomalies to broader geopolitical events and figures. Think about the intense focus on certain leaders, like Netanyahu. The whispers about faked videos, even potentially faked deaths or injuries, aren’t just wild speculation anymore. They’re part of a discernible strategy. What if I told you that these AI-generated videos serve multiple purposes? One is distraction – throw enough confusing visual ‘evidence’ out there, and people stop focusing on the actual actions or policies. Another is protection. If a leader is under fire, why not release a video showing them ‘fine’ or ‘active,’ even if it’s clearly AI? It’s a digital shield. Remember the Epstein case? The official narrative was questioned by many, and the idea that powerful figures would go to extreme lengths to protect their own is no longer far-fetched. If they fabricated evidence then, why wouldn’t they fabricate video evidence now? It’s a natural evolution of their toolkit.

This pattern suggests a sophisticated operation where AI isn’t just a tool for minor edits; it’s becoming a primary instrument for narrative control. They aren’t just ‘protecting’ individuals; they’re actively shaping reality. The ‘slip ups’ we notice are like pressure valves in this system. They allow the illusion to persist for the majority while giving the observant few a glimpse behind the curtain. It’s a way to manage dissent and maintain control without direct confrontation. They move heaven and earth to protect their interests, and now, they have technology that allows them to do so visually and convincingly (except for those pesky details). The videos showing leaders supposedly reacting to events, the ‘feel-good’ videos that seem out of character – these could all be part of this larger strategy. It’s not about one video being fake; it’s about recognizing the type of video being fake and understanding the potential motive behind its creation. It’s about seeing the forest for the trees, and understanding that the trees are sometimes digital constructs.

The Evolution of AI and Our Ability to Spot It

AI isn’t static, and neither is our ability to detect its misuse. What started with crude attempts, like the unsettling Will Smith eating spaghetti video, has evolved into much more sophisticated manipulations. But the core principle remains the same: find the inconsistencies. The colors being ‘too vibrant,’ the unnatural warping of hands, the impossible physics – these are tell-tale signs we’re learning to recognize. It’s a constant arms race. As AI gets better at mimicking reality, our critical thinking skills need to get sharper. Think about it: the person who noticed the ring vanishing wasn’t using special software; they were just paying attention. That’s the key. We don’t need advanced tech to spot the fakes; we need awareness and a willingness to question what’s presented as fact. It all makes sense now! The ‘slip ups’ are becoming more subtle, yes, but they’re still there if you know where to look. The community of skeptics, the people sharing these observations, they’re building a collective knowledge base of AI tells.

This evolution means we can’t be complacent. Just because we spotted one anomaly doesn’t mean we’ve mastered it all. The AI developers are learning from our observations, refining their algorithms to avoid those specific mistakes. That’s why maintaining skepticism is crucial. As one observer noted, the conspiracy community itself has been fooled by AI recently, highlighting how easy it is to be deceived. We have to be vigilant, not just about confirming our existing beliefs, but about genuinely questioning everything. Confirmation bias is their ally; critical, open-eyed observation is our weapon. The fact that colors might look ‘off’ in AI videos, or that movements seem slightly robotic, these are the new frontiers of detection. It requires us to become more media literate, more attuned to the subtle cues that something isn’t quite right. It’s a skill, and like any skill, it improves with practice. Every ‘slip up’ we notice is a learning opportunity, not just for us, but potentially for them too, which is why sharing these findings is so important.

The Deliberate Distraction: Keeping Us Guessing and Divided

Could the sheer volume of these suspicious videos, many focusing on high-profile figures and controversial events, be a deliberate tactic to distract us from more critical issues? What if I told you that these AI-generated narratives are designed not just to deceive, but to occupy our mental space? Think about it: when a major story breaks about potential war crimes, election fraud, or systemic abuses, suddenly a highly questionable video surfaces, generating intense debate and confusion. It’s like a digital smoke screen. The example given about focusing on a potential cover-up involving Trump, while crucial news about DOJ actions gets sidelined – this fits the pattern perfectly. By flooding the zone with confusing, potentially fake visual evidence related to already contentious topics, they keep us arguing about the authenticity of the video rather than the substance of the underlying issue.

This strategy serves multiple purposes. It creates chaos and confusion, making it harder to build a coherent narrative or demand accountability. It exploits existing divisions, pitting those who believe the video against those who don’t, often along pre-existing political or ideological lines. It also demonstrates power – the ability to insert synthetic evidence into the public discourse and see how it’s received. The ‘slip ups’ in these distraction videos might be more pronounced because the goal isn’t necessarily long-term believability, but immediate engagement and diversion. They want us talking about the fake video, not the real story. It’s a sophisticated form of information warfare, using our own desire for visual proof against us. Recognizing this pattern is key. It’s not enough to just spot the AI; we need to understand the intent behind its deployment. Are we being distracted from something bigger? Is this video part of a coordinated effort to shift the conversation away from accountability? Staying skeptical about everything, especially when it seems designed to provoke a strong reaction, is our best defense.

The Ultimate Goal: Undermining Trust Itself

Beyond protecting individuals or distracting from specific events, could the ultimate goal of these deliberate ‘slip ups’ and AI manipulations be even more insidious? What if I told you it’s about eroding our fundamental trust in reality itself? Think about the long-term effect of constantly being fed potentially fake videos, only to have the inconsistencies revealed later. It creates a state of perpetual uncertainty. If you can’t trust what you see on your screen, what can you trust? This constant undermining of visual evidence could be designed to make us suspicious of everything, questioning not just specific videos, but the very nature of information and truth in the digital age. It’s like a psychological operation aimed at making us all a little crazy, unable to discern fact from fiction, reality from simulation.

Epstein, Netanyahu, Oct 7th – these aren’t just isolated incidents; they’re part of a broader campaign to demonstrate control over the narrative, regardless of how absurd it looks. The idea that they’re “alive and perfectly fine in their underground bunkers” might be extreme speculation, but the underlying principle – that they have the means and motive to manipulate information on a grand scale – holds weight. By showing us these ‘flawed’ videos, they might be trying to normalize the idea that reality is malleable, that truth is subjective, and that our own senses and observations are unreliable. It’s a way to make us complicit in our own confusion. The colors, the glitches, the shifting ratios – they all contribute to this overarching goal of making us question everything, ultimately rendering us powerless to act against injustice because we can’t agree on what’s even happening. It’s a war on perception itself, and the ‘slip ups’ are the weapons they use to make us doubt our own minds.

It’s All Connected: The Web of Deception Weaves Wider

After looking at all these pieces – the disappearing rings, the undamaged cars, the vibrant but fake colors, the shifting ratios, the potential for distraction and protection – it becomes impossible to view them as isolated incidents. It all makes sense now! They are threads in a much larger web of digital deception. This web isn’t just about faking a single video or protecting a single person; it’s about controlling the information landscape. The ‘slip ups’ are the joints where the web shows its structure. They are the moments of vulnerability that reveal the underlying manipulation. Think about it: from political propaganda to social media trends, from historical ‘evidence’ to breaking news, the potential for AI manipulation is vast, and the signs are becoming more apparent.

The key takeaway isn’t just that some videos are fake – it’s that the methodology of using AI, placing deliberate ‘slip ups,’ and then observing the reaction is a pattern we need to recognize everywhere. It’s about understanding that the people behind this aren’t just clumsy hackers; they are strategic operators using cutting-edge technology to shape our reality. They move heaven and earth to protect their interests, and now, they have the tools to do so in ways we’re only beginning to comprehend. The next time you see a video that seems slightly ‘off,’ that generates intense but confusing debate, or that feels like it’s trying too hard to tell you something specific – pause. Look closer. Check the details. Ask the hard questions. Don’t be discouraged if the ratio seems manipulated or comments are hidden; that might be exactly the reaction they want. Your skepticism, your critical eye, your willingness to connect the dots – that is the ultimate countermeasure. The ‘slip up’ isn’t a mistake; it’s an invitation to see the truth, if you’re brave enough to look. And when you do, you’ll see the web for what it is, and understand just how much is being hidden in plain sight.